Visual Studio 2005 and later versions have a built-in code coverage feature, but it is only available in the Team edition. However, a standalone profiler is available for download from the Microsoft site. Code coverage does a lot for a programmer's confidence in the code. It is not possible to test every execution path; however, if the tests execute 90% of the code lines, the chance of undetected bugs becomes quite small.

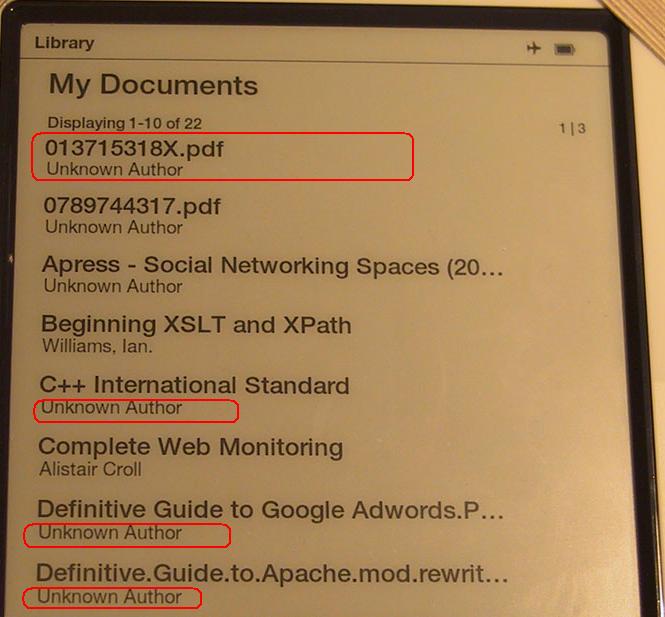

Our project uses a different process, one that can't be managed entirely through the GUI. To begin with, we write our unit tests with boost.test, and each test file is built into its own executable. Large projects have hundreds of test files, and managing them through a GUI is a formidable task.

Another issue is how frustrating it is to view the metrics. The interface provided is quite clunky, and you have to navigate thousands of functions and class methods to find the coverage for the method you are focusing on. The color display in the editor leaves a lot of room for improvement.

I recently investigated ways to collect and analyze code coverage data programmatically, and it was a success. The documentation on this aspect is quite weak, so I'd like to share some points with readers.

Collecting Data

For code coverage to be collected, the executable must be instrumented. Some web pages I found state that the link flag -PROFILE is required, while others do not mention this requirement. In our build system, this link flag triggers the instrumentation. We use boost.build as our build system, and we changed the file msvc.jam slightly so that it launches the instrumentation step whenever <linkflags>-PROFILE is passed.

The Visual Studio documentation calls these tools “Performance Tools”, and they are located in the C:\Program Files\Microsoft Visual Studio [Version]\Team Tools\Performance Tools directory. I recommend adding this path to the PATH variable in vcvars.bat so that the commands are available as soon as you open the command prompt.

To instrument the executable, run the command:

vsinstr.exe <YOUR_EXE> /COVERAGE

As stated above, our build system runs this step automatically: if <linkflags>-PROFILE is present, the executable is instrumented.

The second step is to collect the data. To do so, first start the vsperfmon process. Because multiple executables can run in one session, we currently carry out this step manually:

start VSPerfMon /COVERAGE /OUTPUT:<REPORT_FILE_NAME>

Here we use the start command to open a separate console, because vsperfmon would otherwise block the current one.

Now run the tests. Code coverage data will be collected.

Once testing is done, shut down the vsperfmon process:

vsperfcmd -shutdown

After this command completes, the coverage data is stored in the file we specified. The coverage file is in a proprietary format with no documentation about its structure. Fortunately, Microsoft lets us export it, either from Visual Studio or by calling the coverage analysis API.
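The collection steps above can be scripted end to end. The following is a minimal Python sketch, not our actual build integration: the function names `session_commands` and `run_session` are hypothetical, and the command lines are the ones shown in this article. It does not wait for the monitor to finish starting up, which a real script may need to do.

```python
import subprocess


def session_commands(test_exes, report_file, instrument=True):
    """Return (setup, tests, teardown) command lists for one coverage session."""
    setup = []
    if instrument:
        # Our build system normally does this step; shown here for completeness.
        setup += [["vsinstr.exe", exe, "/COVERAGE"] for exe in test_exes]
    # "start" is a cmd.exe builtin, so the monitor is launched through cmd /c.
    setup.append(["cmd", "/c", "start", "VSPerfMon", "/COVERAGE",
                  "/OUTPUT:" + report_file])
    tests = [[exe] for exe in test_exes]
    teardown = [["vsperfcmd", "-shutdown"]]
    return setup, tests, teardown


def run_session(test_exes, report_file, instrument=True):
    """Run every test executable under a single vsperfmon session."""
    setup, tests, teardown = session_commands(test_exes, report_file, instrument)
    for cmd in setup:
        subprocess.run(cmd, check=True)
    try:
        for cmd in tests:
            # A failing test should not stop the remaining tests from running.
            subprocess.run(cmd, check=False)
    finally:
        # Always shut the monitor down so the coverage file gets written.
        for cmd in teardown:
            subprocess.run(cmd, check=True)
```

Keeping the command construction separate from the execution makes it easy to print the commands for inspection before running anything.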

Converting to XML

You can drop the newly created coverage file into Visual Studio. You cannot do much with its interface, though, as you have to navigate many functions to locate the ones you want to view. Furthermore, it does not report the coverage ratio per source file, only per method.

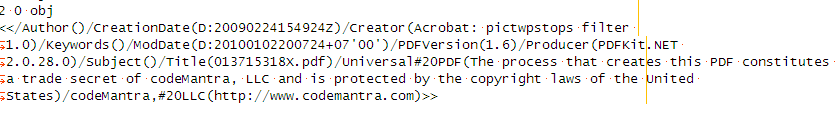

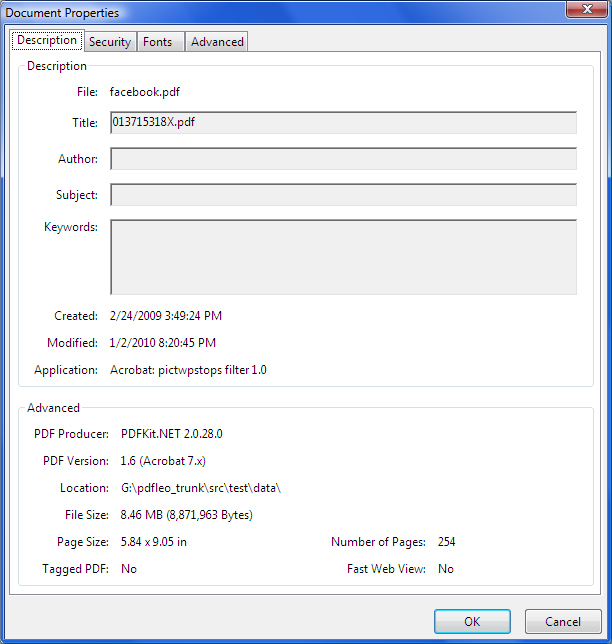

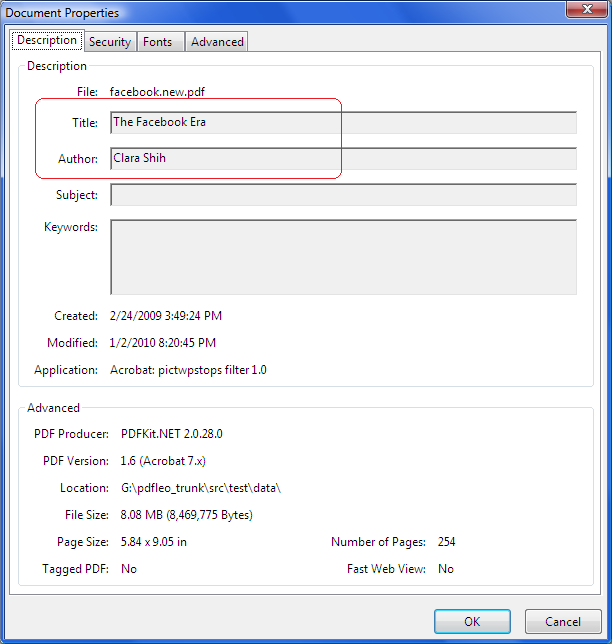

You can export the XML file from Visual Studio, or use the code I provide below to export it programmatically. Oddly, the two give different results: the file exported from Visual Studio is encoded in UTF-16 and has no line endings. I spent quite a bit of time converting it into UTF-8 with line endings so that my favorite editor could open it. It looks to me as if Visual Studio uses a different method to export, and it might be written in native C++.
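For readers facing the same UTF-16 export, the conversion can be done in a few lines. This is a Python sketch under some assumptions rather than the conversion I actually used: the function name is hypothetical, the input is assumed to carry a byte order mark, and a line break is inserted wherever one tag directly follows another.

```python
import re


def utf16_to_utf8_with_newlines(src, dst, chunk_chars=1 << 20):
    """Re-encode a UTF-16 XML file as UTF-8, breaking lines between tags."""
    with open(src, "r", encoding="utf-16", newline="") as fin, \
         open(dst, "w", encoding="utf-8", newline="\n") as fout:
        carry = ""
        first = True
        while True:
            chunk = fin.read(chunk_chars)
            if not chunk:
                break
            text = carry + chunk
            if first:
                # Keep the XML declaration consistent with the new encoding.
                text = re.sub(r'(<\?xml[^>]*encoding=")[^"]*(")',
                              r"\1utf-8\2", text, count=1)
                first = False
            # Hold back a trailing ">" so a "><" pair split across two
            # chunks is still recognized on the next iteration.
            if text.endswith(">"):
                carry, text = ">", text[:-1]
            else:
                carry = ""
            fout.write(text.replace("><", ">\n<"))
        fout.write(carry)
```

Reading in chunks keeps memory use flat, which matters because these exports can run to hundreds of megabytes.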

The programmatic way that Microsoft wants us to use is through a managed assembly. There are several MSDN blogs on this topic; unfortunately, the one I found contains two errors: it fails to point out that the symbol path must be set, and its WriteXml call is wrong. My code is posted below:

    using System;
    using System.Collections.Generic;
    using System.Text;
    using Microsoft.VisualStudio.CodeCoverage;

    namespace coverdump
    {
        class Program
        {
            static void Main(string[] args)
            {
                if (args.Length != 3)
                {
                    Console.WriteLine("Usage: coverdump coverage xml symbolpath");
                    return;
                }

                // The third argument serves as both the symbol path and the executable path
                string symbolPath = args[2];
                CoverageInfoManager.SymPath = symbolPath;
                CoverageInfoManager.ExePath = symbolPath;

                // Create a coverage info object from the file
                CoverageInfo ci = CoverageInfoManager.CreateInfoFromFile(args[0]);

                // Ask for the DataSet. The parameter must be null
                CoverageDS data = ci.BuildDataSet(null);

                // Write to XML
                data.WriteXml(args[1]);
            }
        }
    }

I had to overcome some issues not mentioned elsewhere. The first issue is references. You must add references to two files: Microsoft.VisualStudio.Coverage.Analysis.dll and Microsoft.DbgHelp.manifest. Both are located under C:\Program Files\Microsoft Visual Studio [Version]\Common7\IDE\PrivateAssemblies. When I first ran the code, it complained that the assembly did not match the one requested, and I found that the file Microsoft.DbgHelp.manifest did not match dbghelp.dll. I do not know whether they were mismatched from the start or only after a subsequent update. Either way, I had to update the version number and remove the publicKey attribute for the program to run properly.

The third argument is used as both the symbol path and the executable path. You can specify multiple paths separated by semicolons. If you work with Visual Studio 2010, the interface has changed a little: the properties SymPath and ExePath are now string arrays.

It is a little odd to be asked for the symbol path, considering that Visual Studio does not ask for it when loading a coverage file. In other words, the coverage file must already contain the path to the EXE (and for a debug build, the PDB file name can be found in the EXE).

Analyzing Data

PHP is my favorite scripting language, and I use it to analyze the data. As a first step, my goal is to display the code coverage ratio for each source file specified on the command line, and also to produce a code diff that our programmers can view afterwards. This is much better than what we had before, when programmers had to navigate thousands of methods before they could locate useful information. From a project management perspective, we can now view the code coverage ratio per source file.
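The per-file ratio boils down to counting covered lines for each source file. Here is a small sketch in Python rather than PHP, assuming the line records have already been reduced to (file, line number, covered) tuples. That tuple shape and the function name are hypothetical, and counting a line as covered when any record covers it is my own simplification.

```python
from collections import defaultdict


def coverage_by_file(line_records):
    """Map each source file to its fraction of covered lines (0.0 to 1.0)."""
    seen = defaultdict(dict)  # file -> {line number: covered?}
    for path, line_no, covered in line_records:
        # A line may show up in several records; any covering record counts.
        seen[path][line_no] = seen[path].get(line_no, False) or bool(covered)
    return {path: sum(lines.values()) / len(lines)
            for path, lines in seen.items()}
```

The resulting dictionary can be filtered by the source files named on the command line before printing.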

The resulting XML file can be very big, even huge. The first one I got was 200 MB, generated from a mere 15 MB coverage file. If you load the whole file into memory, the process can take quite a while. For that reason I chose the XMLReader class, which reads the XML file as a stream. I read all <Method> elements as well as all <SourceFiles> elements.
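The same streaming idea can be sketched in Python with `xml.etree.ElementTree.iterparse`, which plays the role XMLReader plays in my PHP script. The element names are the ones mentioned above; the function names are hypothetical, and the sketch makes no assumption about which child fields each element carries, it simply collects whatever simple children it finds.

```python
import xml.etree.ElementTree as ET


def local_name(tag):
    """Strip a '{namespace}' prefix from an element tag, if present."""
    return tag.rsplit("}", 1)[-1]


def iter_records(xml_path, wanted=("Method", "SourceFiles")):
    """Yield (element name, {child name: text}) without loading the whole file."""
    for _event, elem in ET.iterparse(xml_path, events=("end",)):
        name = local_name(elem.tag)
        if name in wanted:
            # Collect only leaf children; nested tables are skipped here.
            fields = {local_name(child.tag): (child.text or "").strip()
                      for child in elem if len(child) == 0}
            yield name, fields
            # Drop the element's content so memory use stays bounded.
            elem.clear()
```

Each record arrives as a plain dictionary, so the caller can pick out the fields it needs once the actual schema of the export is known.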

So that programmers can see the difference, my script creates a second file based on the line coverage data: every line that is not covered is written as a blank line. After all lines are written, the script calls diff -U 100 to produce a diff between the original and the new source file. The option -U 100 tells diff to display 100 lines of context, which basically produces a diff file containing all the original source lines.
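The blank-line trick can also be reproduced without an external diff tool. The following Python sketch uses the standard `difflib` module in place of the diff command; the function names are hypothetical, and the set of uncovered line numbers is assumed to come from the line coverage data.

```python
import difflib


def blank_uncovered(source_lines, uncovered):
    """Copy the source, replacing each uncovered line (1-based) with a blank one."""
    return ["\n" if number in uncovered else line
            for number, line in enumerate(source_lines, start=1)]


def coverage_diff(source_lines, uncovered, name="source", context=100):
    """Return a unified diff in which removed lines are the uncovered ones."""
    marked = blank_uncovered(source_lines, uncovered)
    return "".join(difflib.unified_diff(source_lines, marked,
                                        fromfile=name,
                                        tofile=name + ".covered",
                                        n=context))
```

In the output, every line prefixed with a minus sign is an uncovered source line, and the large context value keeps the surrounding code visible. An uncovered line that is already blank produces no difference, which is harmless.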

In the future we might expand the script to do more reporting. XSLT is an interesting option here.